What Is Authorship When Machines Can Write?

A few years ago, it would have seemed bizarre to have to state that I wrote every word of my new book. After all, who else would have written it? Yet when I began the process, three years into ChatGPT’s existence, the question was not who else but what else. Artificial intelligence has become deeply embedded in countless industries from healthcare to finance to retail and beyond. And the prospect of computers becoming tools that don’t just augment human creativity and intelligence but replace them altogether has moved from the realm of sci-fi to the front pages of newspapers.

At first blush, these headlines would appear deeply urgent and unprecedented — and on one level, they certainly are. But on another level, the concerns of such headlines have a much longer history than meets the eye. In fact, one could argue that AI agita predates AI itself.

In 1953, British author Roald Dahl published a collection of stories titled “Someone Like You,” which included a prescient piece called “The Great Automatic Grammatizator.” Its plot revolves around the machinations of Adolph Knipe, who is both a brilliant electrical engineer and a frustrated writer with stacks of rejection letters. Knipe persuades his boss that a fortune could be made by building an “automatic computing engine” that produces acceptable, if not great, works of fiction. Soon, the machine is spewing out dozens of mediocre stories, which Knipe sells at a rate that undercuts the production of human writers. Eventually, Knipe establishes a near-monopoly by offering would-be authors a “golden contract” that, in exchange for the use of their names, pays them not to write. The story concludes with a vignette of a poor and struggling writer who has (so far) refused to sign Knipe’s agreement. “Give us strength, oh Lord,” he prays, “to let our children starve.”

Dahl’s short story reflected the events around him. The same year Dahl’s story appeared, Christopher Strachey, a scientist at the University of Manchester, had coaxed a Ferranti Mark 1 computer to make random selections from a vocabulary of some 70 words. The result was short love letters, produced via a programmed algorithmic process, containing sentences such as, “My sympathetic affection beautifully attracts your affectional enthusiasm. You are my loving adoration.” While the poetry was rather poor, the products were intelligible and the combinatorial possibilities numbered in the tens of billions.

Whereas Dahl had described a dystopia in which computers prodded human authors into unemployment or worse, Italo Calvino intuited a different future. In a 1967 lecture titled “Cybernetics and Ghosts,” the Italian writer recognized that “electronic brains,” although still limited in ability, would soon provide a “convincing theoretical model” for the “most complex processes of our memory, our mental associations, our imagination, our conscience.” Calvino wrote his lecture amid a flurry of influential research in linguistics, semiotics, and literary criticism by figures such as Noam Chomsky, Umberto Eco, and Jacques Derrida. Scholars, Calvino explained, were “beginning to understand how to dismantle and reassemble” language. At the same time, engineers were building machines that could more capably recognize words, read sentences, translate passages, and produce summaries of scanned texts.

Linking these developments, Calvino concluded it was inevitable that soon machines would be “capable of conceiving and composing poems and novels.” His premise was essentially that writing was a “combinatorial game” in which authors, consciously or not, followed certain rules as they assembled words to make texts. An author’s “genius or talent,” then, was nothing more than “finding the right road empirically,” albeit a process certainly advanced by experience and self-confidence. “When things are going well,” Calvino said, an author is just operating optimally as a “writing machine.”

In 1981, an obscure new author put some of Calvino’s imaginings down on paper, so to speak. Bob Guccione and Kathy Keeton, the publishers of Omni, a magazine that blended popular science and science fiction, included a short story called “Soft Ions” in one of their issues. Credit for the story was given to an entity called “RACTER,” a software program developed by William Chamberlain, a freelance writer, and Thomas Etter, a programmer. Their creation’s name was an abbreviation of “raconteur,” as their modified BASIC program only allowed six-character file names. “Soft Ions” was the “first experiment in computer-generated science-fiction writing.” Omni’s editors explained that RACTER “picks nouns and verbs, adjectives and adverbs at random” but then “strings them together according to rules of grammar” that Chamberlain and Etter had rendered into computer code.

RACTER was later credited with producing a book of poetry and stories called “The Policeman’s Beard Is Half-Constructed.” (The title was derived from one of the program’s first statements.) As with “Soft Ions,” RACTER’s prose blended the comical with the absurd. For example, after introducing two characters named Bill and Sarah, RACTER said that they “chant of their difficulties and their happiness. They have love but they also have typewriters.” Perhaps more profound was RACTER’s observation that “reflections are images of tarnished aspirations.”

RACTER soon caught the attention of A. K. Dewdney, who wrote a column for computer hobbyists that appeared in Scientific American. Dewdney was “charmed” by the text-generating program’s utterances even as he found himself “on the brink of a completely unknown mental world, one that I would prefer not to enter.” Dewdney put RACTER in conversation with a version of computer scientist Joseph Weizenbaum’s ELIZA program, an early chatbot that simulated the role of a psychotherapist. The result — which Dewdney characterized as a dialogue between a “rather unimaginative psychiatrist” and an “extroverted, manic, possibly dangerous patient” — included passages such as this:

ELIZA: Please state your problem.

RACTER: Excellent! Excuse me a minute while I attend to some business. I’ll be right back.

ELIZA: Why do you need to tell me to excuse you while you attend to some business?

RACTER: Is it better to need or to despise? Because electrons are brave. Braveness is nice, don’t you think? And the needers all insist upon it. Do you imagine I am a Communist?

Dewdney noted that “conversational programs” like RACTER pointed to a future in which computers would be able to “discuss problems intelligently with humans” rather than “passively accepting” the programs users provided. One outcome, he concluded, might be a “wildly associative mind” that borders on “artificial insanity.”

The scenarios Dahl, Calvino, and others imagined were portents of today’s anxiety and fear about the threat that such “writing machines” pose not just to human authorship but to humans’ relationship with texts themselves.

The spark that lit the present fire was the seemingly sudden emergence of quasi-artificial intelligence programs based on concepts such as “deep learning” and “large language models.” With these methods, computers are given massive amounts of digital data, which they then sift through to identify patterns. A classic example often used to illustrate the point is providing a computer system with thousands of images of cats. Eventually, with enough time, data, and processing power, the system can begin to recognize features associated with felines.

An exemplar of this approach is ChatGPT, a type of “transformer language model.” These programs learn how language “works” by relying on complex statistical methods and access to massive amounts of written text, including hundreds of thousands of published books and articles, many of which were pirated. Once trained, chatbot programs such as ChatGPT can use predictive modeling (imagine a radically more powerful version of your smartphone’s autocomplete feature) to generate text in response to prompts. One result is a software application that can realistically (sometimes) replicate human conversation.

GPT-1, the earliest forerunner of the model behind ChatGPT, was released by programmers at OpenAI in 2018. Funding came from a group of tycoons who pledged over $1 billion to support the development of artificial general intelligence systems (defined as “autonomous systems that outperform humans at most economically valuable work”) that were “safe and beneficial.” Existing journalistic accounts suggest OpenAI’s formation was catalyzed in part by a general fear among the organization’s founders that future artificial intelligence systems, especially those developed by profit-minded corporations, would pose an existential threat to humanity, a fear shared by philosopher Nick Bostrom in his 2014 book “Superintelligence: Paths, Dangers, Strategies.”

Bostrom’s concerns come from a long lineage. Indeed, ChatGPT is the sort of thing that would have fascinated and repelled Norbert Wiener, the MIT computer scientist and mathematician who warned of the dangers of replacing people with machines as far back as the 1940s.

It is hard to imagine Weizenbaum, too, being anything other than appalled by the new technology. He would have been especially dismayed by the propensity of large language models to fabricate misinformation (a phenomenon computer scientists charitably call “hallucinations”) or to regurgitate the often noxious and hateful biases and prejudices found in their training data. Weizenbaum was deeply troubled by how programs like ELIZA could easily fool users into thinking they were interacting with a sentient machine. Even more problematic was his sense that people wanted to be deceived. He reserved special reproach for the computer scientists whose work introduced falsehoods into the world.

“One shouldn’t lie,” Weizenbaum once said, an injunction the programmer-turned-critic surely would have directed at contemporary AI researchers and some of their software creations.

It is virtually impossible to predict how technologies such as large language models will affect societies and economies in the years to come. Picture someone reading Edmund Berkeley’s book, “Giant Brains,” in 1949. It would not have been very hard for that person to accept the book’s supposition that computers would eventually become microscopically smaller, more powerful, and pervasive. But that same reader would have been stunned to learn that computers would completely upend, for example, book publishing or the film and music industries.

When he was writing “Giant Brains,” Berkeley surely found himself in a conundrum: The technology he was writing about was changing so rapidly that some of what he included in his book about the contemporary state of computing was already out of date by the time it appeared. Writing about large language models and chatbots today poses just the same hazard. It is quite likely that everything I have written in my own book about chatbots will be out of date by the time you read this.

Nonetheless, the changing symbiosis between people and computers compels us to consider a topic at the heart of many contemporary discussions about programs like ChatGPT: the author’s creativity. Originality and inspiration are, of course, of central importance for any writer, artist, musician, or, for that matter, computer programmer. As a value and a trait that often eludes definition and quantification, creativity has been cultivated and studied for decades by psychologists, engineers, and advertising executives.

What does it mean when machines can competently create prose, poetry, or software programs? Are computers displaying a sense of creativity? Are they functioning as authors? One glib answer, harking back to Strachey’s experiments at the University of Manchester, is that machines have already been doing this for decades.

In 1965, John R. Pierce — a renowned electrical engineer at Bell Laboratories and also a published science fiction author — described how researchers were using computers to make visual artworks, compose music, and write original compositions. Soon after, an art exhibition in London featured an array of computer-generated works, including programs that produced pseudo-Japanese haiku and “high-entropy essays” mimicking undergraduate papers. Critics in the 1960s looked at these computer-generated creations and unswervingly asked, “Is this art?” or “Does it count as literature?”

In Dahl’s “Great Automatic Grammatizator,” the fictional Adolph Knipe conceded that “however ingenious” a computer may appear to be, it is still “incapable of original thought.” Many people today might argue otherwise. Generative AI software was a central point of contention in the Writers Guild of America strike that shut down television and film production in 2023. A key part of the labor dispute’s settlement was the proviso that AI tools “can’t write or rewrite literary material” and also that film studios must disclose whether any materials given to a writer were “AI-generated.”

At the same time as writers were walking the picket lines, a cohort of prominent authors filed a legal complaint protesting OpenAI’s unauthorized appropriation of tens of thousands of digitized books (including some of my own) to create a robust dataset used to “train” ChatGPT. One might even go so far as to say that contemporary chatbots are not “writing” but rather engaging in a sophisticated form of plagiarism (or bullshit generation). Besides treating previously published works as a stolen resource to be mined, the seeming ease with which chatbot programs produce results obscures the human labor — often done by low-paid workers in places far removed from Silicon Valley — necessary for them to work properly.

Meanwhile, the proliferation of chatbots has also made it easier for “authors” to “create” hundreds of books with “their” name on the cover. One astute observer suggests we are entering into a “textpocalypse,” in which computer-generated writing overwhelms the internet with “synthetic text devoid of human agency.”

Computers certainly have the potential to alter the nature of what it means to be an author. But what this will actually look like in the years to come is hard to say. After all, a “computer” today bears little resemblance to the machines that Edmund Berkeley or Joseph Weizenbaum tinkered with in their time. If history tells us anything, it’s that the human activities that we think distinguish thinking from computation — like writing and reading — are not fixed but contingent and constantly in flux.

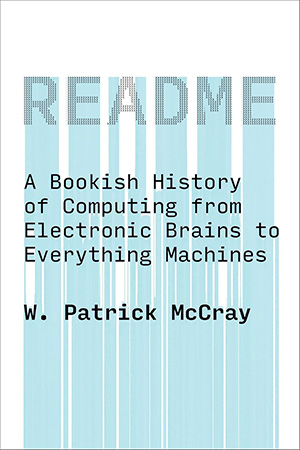

W. Patrick McCray is Professor of History at the University of California, Santa Barbara. Originally trained as a scientist, he is the author or editor of eight books, including “README,” from which this article is adapted.